Introduction

3D technologies are a versatile resource, valuable both for the enhancement of cultural assets in contexts such as museums or through XR applications, and as fundamental tools in documentation and research. In recent years, their application in the dissemination of cultural heritage has further deepened the relationship between the GLAM sector (galleries, libraries, archives, and museums) and the gaming industry, highlighting the need for different approaches to data processing. Models created for documentation must achieve high resolution and data density to ensure accuracy, while those intended for Web3D or XR environments require processing and optimisation in ways similar to the creation of video game assets, so that they can perform effectively in virtual contexts.

Achieving high visual quality and a natural perception requires assets to provide a complete representation of the physical object. While gaps may be acceptable in documentation models for reasons of transparency, they undermine visual credibility and usability in dissemination contexts. When a 3D model must address both aims — visual credibility and transparency — the integrated or corrected areas should therefore be explicitly identified and documented. In this paper, visual credibility is defined as the degree to which a 3D model is perceived as complete, coherent, and plausible by the viewer in dissemination contexts.

This duality highlights the need for structured workflows that can balance both goals, ensuring scientific reliability while maintaining high visual impact.

The pipelines borrowed from 3D authoring in gaming significantly expand the potential for visually credible rendering in Web3D environments, enabling lightweight models that can be streamed on any device without requiring dedicated software. When addressing non-specialist audiences, the visual appeal of digital objects is crucial for engaging the public; this can be achieved through processes that prioritise performance over accuracy. In this sense, visual credibility is often pursued through perceptual optimisation strategies rather than through strict geometric or material accuracy, which weakens the value of the resulting models for documentation purposes.

Furthermore, despite the growing use of 3D technologies in archaeology and museums to study, disseminate, and enhance cultural heritage, the lack of a standardised and shared methodology for the processing of acquired data gives rise to concerns and poses challenges of accessibility, transparency, and interoperability in the research domain (Goodman et al., 2016; Peels & Bouter, 2018; Barzaghi et al., 2024a). This issue had already been raised in the London Charter (Beacham et al., 2006; The London Charter, 2009), which stressed that paradata is essential for clarifying the decision-making process behind visualisations; without it, their accuracy and reliability cannot be properly evaluated. This becomes particularly critical when dealing with 3D data manipulation and authorial interventions in 3D reality-based techniques, such as photogrammetry and 3D scanning, carried out to fill holes caused by acquisition constraints (ICOMOS, 2017).

Despite significant advancements, the lack of standardised, transparent workflows that integrate visual credibility and scientific rigour remains a key challenge in the digital documentation of cultural heritage.

This contribution introduces a defined pipeline designed to deliver visually credible results for dissemination purposes while adhering to FAIR principles, with an emphasis on reusability, sustainability, and openness. The pipeline was applied to develop the digital twin (see Barzaghi et al., 2024b and Barzaghi et al., 2025) of the temporary exhibition “The Other Renaissance: Ulisse Aldrovandi and the Wonders of The World”, held in Bologna, and to digitise specimens at the Geological Collection of the Giovanni Capellini Museum, University of Bologna, as part of the CHANGES project (Thematic Spoke 4: Virtual Technologies for Museums and Art Collections). The project focused on the digitisation of hundreds of complex artefacts, necessitating the development and implementation of a thorough methodology. Based on these premises, this article aims to address two complementary research questions:

RQ1: Is it possible to achieve an acceptable level of visual credibility for the GLAM sector in Web3D applications, while adhering to the FAIR principles of reusability, sustainability, and openness?

RQ2: In the context of such a workflow, where dissemination potential and scientific value should be simultaneously pursued, how can transparency related to the reliability and authenticity of the data be improved?

This paper presents three selected case studies from both the Aldrovandi exhibition and the Capellini Museum. Our framework incorporates a seminal paradata-based approach (Apollonio & Giovannini, 2015; Bentkowska-Kafel & Denard, 2016), providing a detailed example of its practical implementation. This method aims to enhance 3D object reconstructions by increasing their informational complexity, integrating metadata that conveys the certainty and quality of the data alongside geometric and texture details. Some parts of the methodological description are adapted from our previous works (see Barzaghi et al., 2024b; Barzaghi et al., 2025; Ammirati et al., 2025).

State of the Art

The photogrammetric acquisition process in a museum setting is often affected by several factors that can compromise the quality of the results, such as restricted spaces, poor lighting conditions, and the presence of reflective or translucent surfaces on the artifacts. In such cases, controlling the ambient light to minimize reflections and unwanted shadows becomes essential, which requires a thorough inspection of the environment and, in some instances, the use of supplementary artificial lighting and specific equipment (Nicolae et al., 2014). However, in real operating contexts, this condition is rarely achievable (Balzani et al. 2024). GLAM collections are known to be highly heterogeneous, frequently including objects that are difficult to capture, either because of their geometric complexity or because they are made of non-collaborative materials such as metals or shiny surfaces (Ministero della Cultura, 2025). Apollonio et al. (2021) distinguish between “extrinsic” and “intrinsic features” that determine the complexity of acquisition processes. Among the extrinsic features, three factors are particularly significant: (i) spatial distribution (SD), (ii) topological complexity (TC), and (iii) boundary conditions (BC), namely the availability of surrounding working areas free of obstacles.

With regard to intrinsic features, the authors emphasize: (i) the D/d ratio, where D corresponds to the diagonal length of the bounding box enclosing a collection piece and d to the average vertex spacing; (ii) surface characteristics, including the degree of surface detail, textural properties, and reflectance behaviors; and (iii) the cavity ratio (CR), a parameter describing the degree of inaccessibility of certain regions of the object to digital acquisition. These factors can guide the operator towards the appropriate techniques and, ideally, towards an acquisition that does not generate holes, since completeness is a requirement distinct from accuracy. This holds even in the field of metrology, where completeness is measured by a specific indicator that complements more common metrics (Nocerino et al., 2020). Constrained accessibility hinders comprehensive surface sampling, consequently leading to the formation of holes within the digital reconstruction. Reflective or translucent materials, in turn, yield ambiguous data, which may result in distortions, alongside holes, that are incompatible with the object’s actual morphology. Such errors are often irremediable and are frequently removed during processing, resulting in incomplete reconstructions.
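As an illustration of the D/d ratio defined above, the following minimal Python sketch computes it for a toy mesh. It rests on two assumptions that are not taken from Apollonio et al. (2021): the bounding box is treated as axis-aligned, and the average vertex spacing d is approximated by the mean edge length. The function names are hypothetical.

```python
import math

def bounding_box_diagonal(vertices):
    """Length D of the diagonal of the axis-aligned bounding box (assumption)."""
    mins = [min(v[i] for v in vertices) for i in range(3)]
    maxs = [max(v[i] for v in vertices) for i in range(3)]
    return math.dist(mins, maxs)

def average_vertex_spacing(vertices, edges):
    """Mean edge length, used here as a stand-in for the spacing d (assumption)."""
    lengths = [math.dist(vertices[a], vertices[b]) for a, b in edges]
    return sum(lengths) / len(lengths)

def d_over_d_ratio(vertices, edges):
    """Ratio D/d: the larger the value, the denser the sampling relative to size."""
    return bounding_box_diagonal(vertices) / average_vertex_spacing(vertices, edges)

# Toy example: a unit square split into two triangles.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
edges = [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2)]
ratio = d_over_d_ratio(vertices, edges)
```

On a real acquisition the same calculation would be run on the exported mesh; a high ratio indicates that fine detail is sampled densely relative to the overall size of the object.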

In both cases, holes in the mesh ultimately derive from unsampled or insufficiently sampled areas of the surface. Depending on the reconstruction algorithm, these gaps may either remain explicit, as in Delaunay-based methods, or be interpolated, as in standard Poisson and other volumetric approaches (Sulzer et al., 2024), where low-confidence polygons are generated and must subsequently be removed before proceeding with the correction. In the absence of reliable surface samples from which to reconstruct these areas, filling the gaps necessarily relies on inference, supported by the intervention of expert operators. The reconstruction of the missing regions is based on surrounding geometry and secondary or indirect documentation, including visual inspection, supporting photographs, or images from the dataset that could not be aligned in photogrammetric pipelines. This process introduces new geometry in a critical yet fundamentally inferential manner, as it is not grounded in direct data acquisition. Although the resulting object, once appropriately optimised, appears complete and can serve as a digital representation of the physical artefact, it does not allow users to distinguish between geometries based on reliable data and those reconstructed by the operator (disregarding metrological considerations). While such a distinction is of little consequence in the video game industry, where three-dimensional pipelines prioritise aesthetic results, in the GLAM sector it carries significant implications for documentation, which must be safeguarded and guaranteed even for three-dimensional models intended primarily for dissemination, and not solely for those created for research and analytical purposes.

In the field of Virtual Reality applied to Cultural Heritage (VR for CH), there is a notable lack of shared standards for documenting integrations and reconstructions, and only a few approaches explicitly expose authorial interventions directly on the 3D geometry through visual encodings suitable for Web3D dissemination. This section offers a focused, non-exhaustive overview of representative approaches on this topic, and frames our contribution within this landscape.

Mapping models using existing ontologies, such as CIDOC-CRM and related extensions, have been proposed to document the planning and creation of 3D models with provenance and paradata (for a selection of papers on the topic, see: Pitzalis et al., 2010; Bruseker et al., 2017; Niccolucci & Felicetti, 2018; Catalano et al., 2020; Amico & Felicetti, 2021; Pamart et al., 2023; Bajena, 2025); these approaches constitute an important basis, but do not yet define operational standards for the geometric tagging of intervention areas. The variety of workflows and the quality of documentation, therefore, remain unresolved critical issues, requiring a common framework for the FAIR management of versions, metadata and paradata throughout all phases. One potential direction for research development is the application of Deep Learning-based segmentation and semantic labelling techniques. These techniques have proven to be effective in contexts characterised by recurring types and homogeneous training datasets (Muhammad Yasir & Ahn, 2022). However, they are not easily generalisable to the heterogeneity typical of GLAM collections. Consequently, these approaches do not yet guarantee direct integration with ontologies.

In the context of methodologies based on semantic annotation for virtual reconstructions, Apollonio (2015) introduced the Virtual Reconstruction Information Management Modeling (VRIMM) framework, designed to systematically manage and document the evidential and interpretative processes underlying digital reconstructions. Later, Foschi et al. (2024) expanded on this approach by developing a gradient-based colour scale that visually represents, for each portion of a digitally reconstructed 3D model, the quality and reliability of the sources employed. Together, these contributions align with the transparency and documentation principles promoted by the London Charter and the Seville Principles. A related but distinct methodology is the Extended Matrix (EM) method (Demetrescu, 2018), which transposes the stratigraphic method used in archaeological excavations (the Matrix) to the domain of virtual reconstructions, allowing all sources utilised and hypotheses formulated to be traced and documented. The application of a colour scale enables the visualisation of data directly on the reconstructions, thereby illustrating temporal and logical relationships, in addition to the integration of components into the model and the utilisation of in situ elements. Furthermore, the integrated toolset included in the Extended Matrix Framework (EMF) provides the technology both for linking the 3D model with the knowledge graph of the EM and for visualising the parts in EMviq, a dedicated viewer built on the ATON framework (Fanini et al., 2021). The Extended Matrix is a system that guarantees transparency and reusability of the reconstruction record in future research. It also promotes standardisation in the publication of models (which is also useful for virtual museums and digital libraries). Finally, it allows for the management of alternative reconstruction hypotheses and aligns with semantic and metadata standards (Demetrescu, 2018; Demetrescu & Ferdani, 2021). Both VRIMM and EM are presented here as illustrative examples of methodologies that employ semantic annotations combined with gradient-based colour scales; neither approach was applied in our case studies.

Presently, human intervention remains widely recognized as imperative for the processing of 3D models, particularly in instances where substantial corrections or the integration of gaps is necessary. However, such intervention is rarely documented beyond the form of generic textual metadata associated with the model as a whole, without a direct link to the geometry. From a FAIR perspective, the initial requirement is to descend to the level of geometry and define methodologies, operational actions and tool sets that allow 3D surfaces to be semantically segmented and directly tagged. This ensures transparency, traceability and reuse of data (Giovannini, 2018; Croce et al., 2020; Amico & Felicetti, 2021; Demetrescu & Ferdani, 2021; Barzaghi et al., 2024a).

Materials and Methods

The methodology presented in this paper was developed within Spoke 4 of the CHANGES project. Specifically, one pilot activity involved the development of a digital twin for the temporary exhibition “The Other Renaissance: Ulisse Aldrovandi and the Wonders of the World,” dedicated to the naturalist Ulisse Aldrovandi and held at the Poggi Palace Museum between December 2022 and May 2023. The second campaign was carried out at the Giovanni Capellini Geological Museum, which is part of the University of Bologna’s museum network. During the two campaigns, 301 and 87 objects were digitised for the Aldrovandi exhibition and the Capellini Museum, respectively. In both cases, the creation and management of 3D data and metadata were conducted in accordance with the FAIR principles (Barzaghi et al., 2024b) to establish a methodology that can be replicated and applied across different contexts (Barzaghi et al., 2024b; Barzaghi et al., 2025). The main challenges were the high number of Cultural Heritage Objects (CHOs) to digitise, their variety in terms of shapes, surfaces, and scales, and the constraints of the exhibition’s limited timeframe and physical space, which often resulted in inaccuracies and holes in the processed meshes. Addressing the issues due to these conditions involved a combination of semi-automated processes and manual interventions, introducing some subjectivity and authorial actions. The raw acquisition data should not be interpreted as inherently free from inaccuracies. Factors such as acquisition geometry, lighting conditions, surface properties, and time constraints can introduce noise, misalignments, and local geometric uncertainty already at the data capture and processing stages. To address this, processing reports produced by photogrammetry software (e.g., Metashape and 3DF Zephyr) can be preserved and made accessible, providing quantitative accuracy indicators that complement the processed models.

In this context, our research output provides a methodological framework for data transparency, prioritising the preservation of three distinct versions of each 3D model derived from the raw acquisition data (RAW):

1. Processed Raw Data (RAWp): the first output of the photogrammetry or scanning software. Only automatic and semi-automatic interpolations are applied, with no geometric corrections. The output is a model with maximum accuracy that preserves the raw data and is usable as a research tool; its main uses are raw data archiving, academic and scientific research, study, and digital preservation.

2. Digital Cultural Heritage Object (DCHO): a complete model obtained after resolving geometry issues. The applied interventions are hole-filling and topological correction, carried out during the 3D modelling phase using computer graphics software. The output is a hole-free, high-resolution model; its main use is for dissemination and valorisation purposes.

3. Optimised Digital Cultural Heritage Object (DCHOo): a more performant version of the 3D model, obtained during the optimisation phase and designed for real-time interaction on web-based platforms (the ATON framework was adopted).

Each stage in the pipeline, from acquisition to web-based publication, was carefully documented to ensure transparency and reproducibility (Barzaghi et al., 2024a; Barzaghi et al., 2024b; Barzaghi et al., 2025). Storing all derivative versions (RAWp, DCHO, and DCHOo) allows for direct comparison, offering a clear record of the authorial decisions and interventions made during the modelling process. This structure and combination of formats enable the traceability of both geometric and appearance-related interventions. In line with the goal of visual credibility, appearance-related adjustments are documented through versioning and the preservation of intermediate assets, rather than through explicit descriptions of physical material behaviour. The authorial aspect of reconstruction echoes the challenges of integrating missing portions of artefacts into virtual archaeological models, highlighting a shared methodology that can be adapted across domains to enhance reliability and applicability in diverse contexts. Accordingly, the pipeline prioritises perceptual coherence over the explicit modelling and documentation of material properties, which remains outside the current scope.
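The relationship between the three stored versions can be made explicit in a small machine-readable record. The sketch below is purely illustrative: the field names, file names, and the assumed derivation chain (RAW to RAWp to DCHO to DCHOo) are hypothetical and do not reproduce the metadata schema actually used in the project.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ModelVersion:
    stage: str          # "RAWp", "DCHO" or "DCHOo"
    file: str           # hypothetical file name
    derived_from: str   # stage (or "RAW") this version was produced from
    interventions: list = field(default_factory=list)  # free-text paradata

def build_manifest(object_id, versions):
    """Serialise the version chain of one object as a JSON string."""
    return json.dumps(
        {"object": object_id, "versions": [asdict(v) for v in versions]},
        indent=2,
    )

# Hypothetical example entries, one per stored version.
versions = [
    ModelVersion("RAWp", "object_rawp.obj", "RAW"),
    ModelVersion("DCHO", "object_dcho.obj", "RAWp",
                 ["hole filling", "topological correction"]),
    ModelVersion("DCHOo", "object_dchoo.gltf", "DCHO", ["optimisation"]),
]
manifest = build_manifest("example-object", versions)
```

A record of this kind would let a reader see at a glance which version carries authorial interventions and from which earlier version it derives.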

It should be noted that this intent does not, by design, address the metrical accuracy of the models or the confidence associated with the specific repair operations applied to the mesh. The proposed pipeline is intentionally agnostic with respect to these aspects, which we recognise as crucial yet still insufficiently addressed in the literature. Rather than attempting to evaluate them directly, the workflow aims to contribute to this issue by providing a structured method and set of tools that make such interventions explicitly observable on the models, prior to any subsequent assessment or validation.

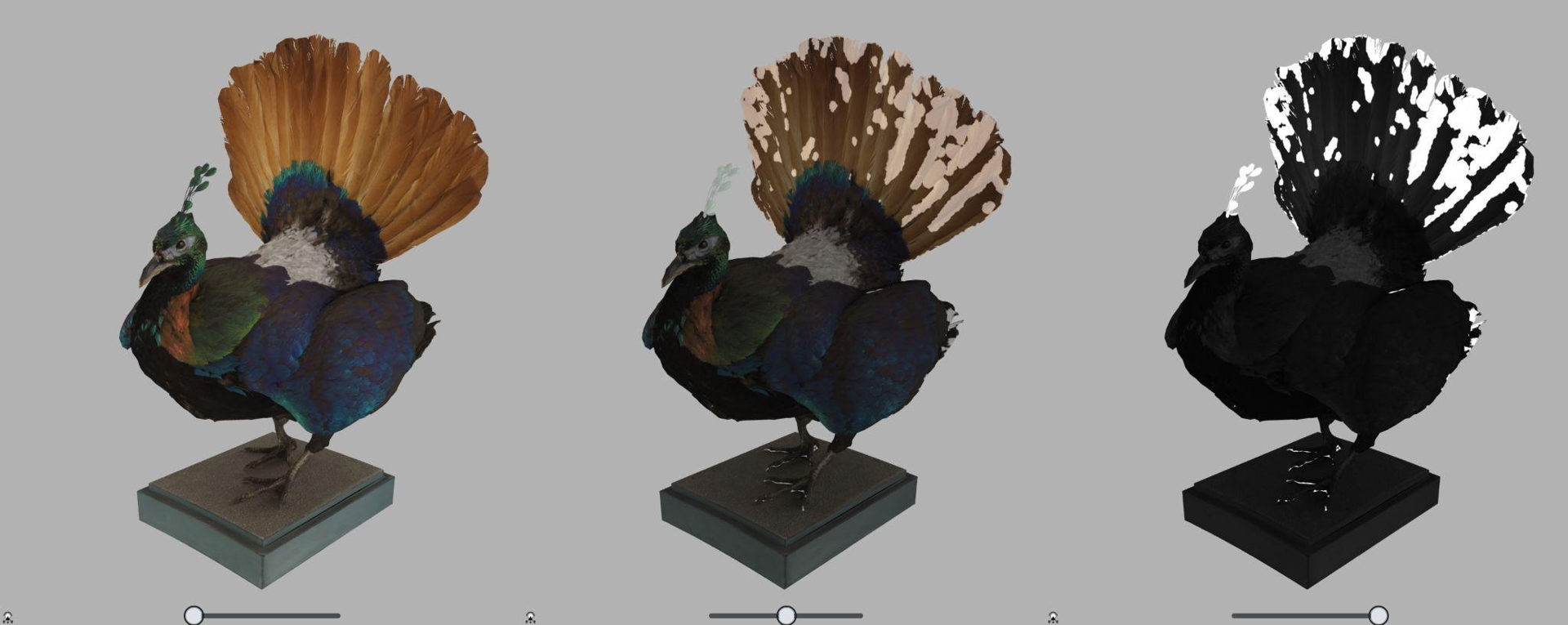

The developed workflow ensured a balance between visual credibility and data interpretability through the associated semantic layer, while generating optimised 3D models whose textures, lighting, and geometry preserve the perceptual qualities of the physical artefacts in web-based dissemination. The level of visual credibility was assessed through direct visual comparison with the original artefacts and their photographs, and validated by feedback from curators involved in the digitisation process (Figure 1). Starting from this premise, we propose a methodology to enhance data transparency further, making it suitable for documentation purposes as well. Integrations or topological modifications between the different versions of 3D models were highlighted through the use of false-colour maps. This kind of visualisation is useful to document the interventions, thereby providing transparent records for comparison and alternative visualisations. Future developments in material capture and authoring tools are expected to raise new challenges for the documentation of appearance, representing an important direction for further methodological refinement.

Figure 1 - A comparison of the photo of the object and the model rendering in ATON

In a recent article (Ammirati et al., 2025), we presented a complementary yet distinct technique, the Refinement Trace Map, which enables the retrospective detection of geometric modifications. It derives a semantic map through ray projection, highlighting areas where the deviation between the raw and corrected versions of the same model exceeds a predefined threshold. Since this constitutes a post-hoc methodology that is software-independent, its flexibility enhances data transparency and supports documentation across different domains and software environments. However, this ex-post methodology presents both methodological and technological limitations, as it remains agnostic to the reason and nature of the interventions applied to the mesh, merely detecting differences between the original and the corrected models. From a technological standpoint, noise and small-scale geometric features may affect the accuracy of ray projection, potentially leading to false detections.
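The thresholding idea behind such a post-hoc comparison can be sketched in a few lines of Python. This is a deliberate simplification and not the published method: it replaces ray projection with a brute-force nearest-vertex distance, and the function name and threshold value are assumptions chosen for illustration.

```python
import math

def trace_mask(raw_vertices, corrected_vertices, threshold):
    """Flag corrected vertices that lie farther than `threshold`
    from every vertex of the raw model (1 = modified, 0 = unchanged).

    Brute-force nearest-vertex search, used here instead of ray projection.
    """
    mask = []
    for c in corrected_vertices:
        nearest = min(math.dist(c, r) for r in raw_vertices)
        mask.append(1 if nearest > threshold else 0)
    return mask

# Toy data: the second vertex differs only by noise, the third is new geometry.
raw = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
corrected = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.001), (1.0, 1.0, 0.0)]
mask = trace_mask(raw, corrected, threshold=0.01)
```

The toy data also shows why the threshold matters: set too low, acquisition noise is flagged as an intervention; set too high, small repairs go undetected.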

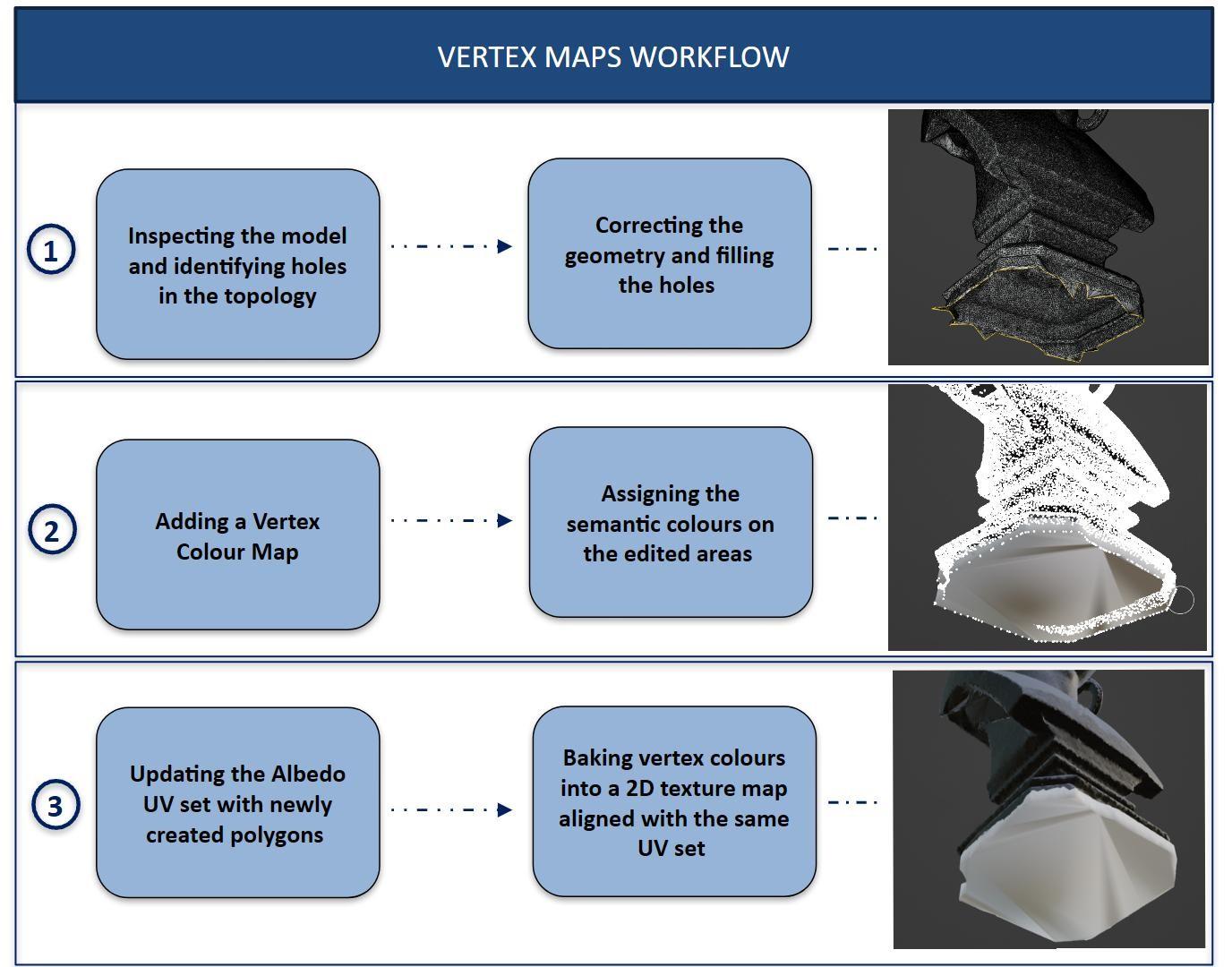

In contrast, the present work proposes recording corrective interventions at the time of their application, thereby eliminating any ambiguity and potentially enabling the annotation to be specialised according to the type of intervention performed, thus improving both its accuracy and reliability. The process is carried out directly within the modelling environment (e.g., Blender), by appropriately encoding a Vertex Colour map on the vertices of the mesh affected by each intervention as it is executed. Once all interventions have been completed, the Vertex Colour map is baked into a 2D texture map that shares the same UV set as the albedo, thereby generating the false-colour map (Figure 2). This map can then be displayed in overlay mode together with the diffuse texture within Web3D viewers, highlighting mesh repairs.

Figure 2 - Diagram illustrating the workflow for creating Vertex Colour Maps in 3D environments
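Because the false-colour map shares the UV set of the albedo, displaying it as an overlay reduces to a per-texel blend. The following Python sketch illustrates this with plain alpha compositing; it is not the shader used by any specific viewer, and the highlight colour and opacity are arbitrary assumptions.

```python
def overlay_trace(albedo, mask, highlight=(1.0, 0.0, 1.0), opacity=0.6):
    """Blend a highlight colour over albedo texels wherever the mask is white.

    albedo: rows of (r, g, b) floats in [0, 1]
    mask:   rows of floats in [0, 1], laid out on the same UV set as albedo
    """
    out = []
    for albedo_row, mask_row in zip(albedo, mask):
        row = []
        for texel, m in zip(albedo_row, mask_row):
            w = opacity * m  # blend weight: 0 on untouched areas
            row.append(tuple((1.0 - w) * c + w * h
                             for c, h in zip(texel, highlight)))
        out.append(row)
    return out

# Toy 1x2 texture: the first texel is original, the second is integrated.
albedo = [[(0.5, 0.5, 0.5), (0.2, 0.4, 0.6)]]
mask = [[0.0, 1.0]]
blended = overlay_trace(albedo, mask)
```

Texels outside the mask keep their original colour, so the photorealistic appearance is untouched wherever no intervention took place.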

Case studies

To validate the proposed methodology, three illustrative case studies were selected, each presenting characteristics that are likely to generate deficiencies in the resulting geometry (Figure 3). Specifically, the objects are distinguished by: (a) complex morphological features, (b) reflective surface properties, and (c) immobility, which prevents repositioning during acquisition. While the proposed methodology is designed to be general and adaptable to a wide range of photogrammetric and 3D reconstruction contexts, specific software and tools were employed in each case study, which will be illustrated in the following sections.

Figure 3 - The three case studies described in this paper: a) the Teriaca Vase; b) the Dschagga woman bust; c) the Glyptodon Jaw

CS1: The Teriaca Vase

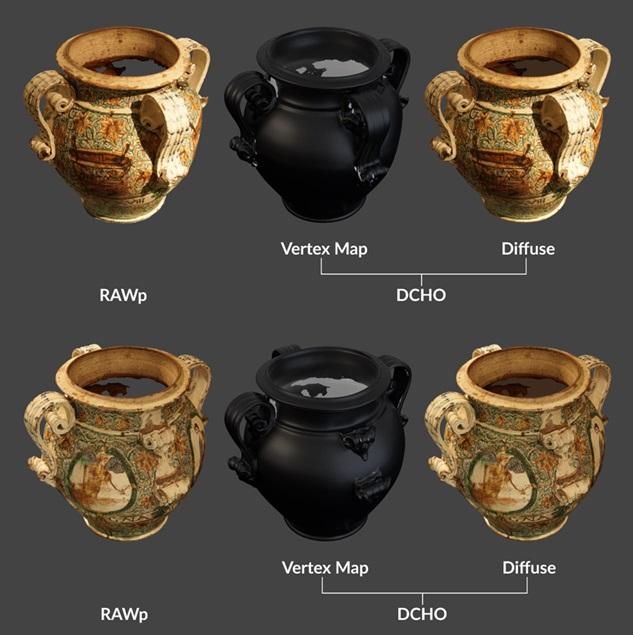

Applying the process to the Teriaca Vase (Sullini, 2025) (Figure 4) enabled the creation of a lightweight and efficient 3D asset optimised for Web3D platforms. It is a late 18th-century jar, preserved at the Medieval Civic Museum in Bologna, that was used to store teriaca, an antidote against snake bites. The vase was acquired through a photogrammetric workflow with a mirrorless Sony A7 I (ISO 800, f/20, 2 sec. exposure, 60 mm focal length), mounted on a tripod. It is worth noting that the item could not be moved and was therefore acquired under the exhibition lighting setup. The manual retopology was carried out in close conjunction with the repair of the mesh, so that the reduction from millions to thousands of polygons simultaneously incorporated the critical decisions required to address gaps and inconsistent morphologies. This procedure ensured fine-grained local control over accuracy and topology, the latter conceived not merely as a rationalisation of the mesh but as a structure deliberately aligned with local geometric features, capable of preserving edges, curvatures and subtle morphological transitions that would otherwise be lost in an indiscriminate simplification.

During this phase, the areas subjected to corrections and integrations were explicitly traced by means of a vertex colour map associated with the new topology, thereby establishing a record of the interventions to be retained across later stages of the workflow. The rationalised topology further enabled the generation of an effective UV set, which provided the common reference system for both material textures and semantic layers.

On this basis, the vertex colour information was eventually projected and baked onto a false-colour semantic map aligned to the same UV coordinates, making it possible to deploy this map as an alternative visualisation mode within the published asset. In this way, the semantic map highlights the regions modified during mesh repair and those affected by insufficient sampling in the texture projection, complementing the photorealistic appearance of the model with a transparent account of its inferential integrations.

Figure 4 - Comparison between the RAWp and DCHO with vertex colour map and diffuse of the Teriaca Vase

CS2: Dschagga woman bust

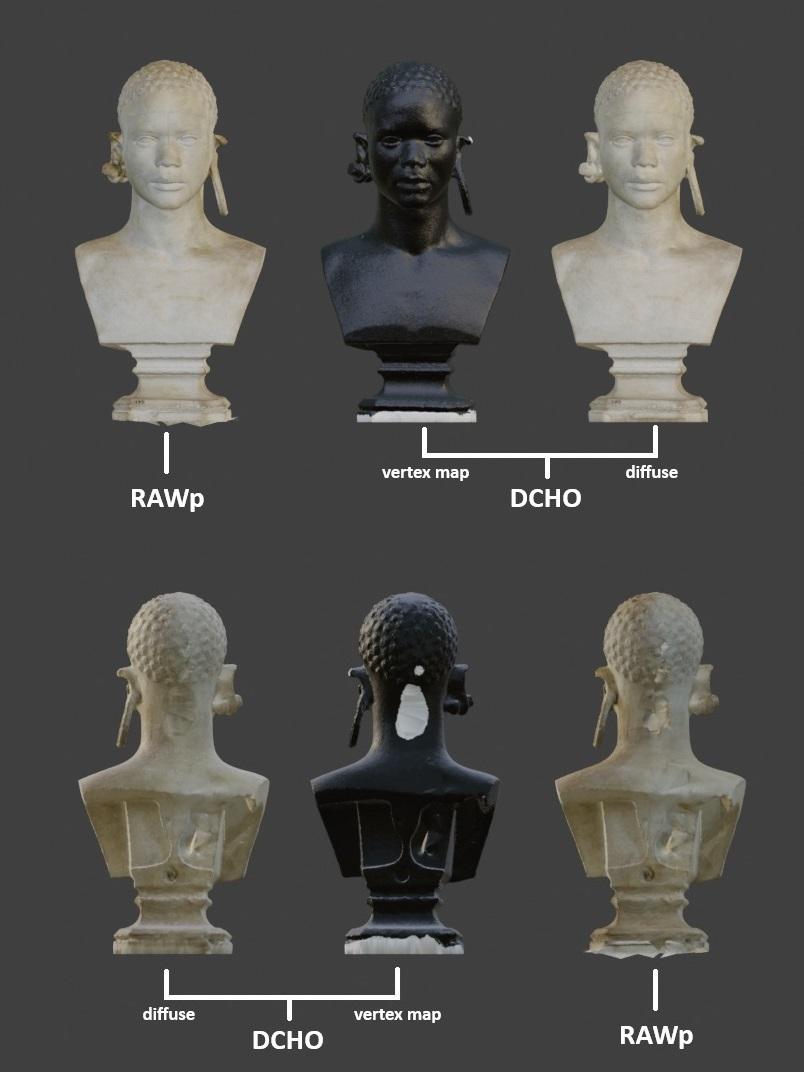

The bust of the Dschagga Woman (Kenya) (Rega, 2025) (Figure 5) is part of the Anthropology Collection of the University of Bologna Museum System. The bust, a plaster cast preserved at the Poggi Palace Museum in Bologna, was part of the exhibition dedicated to Ulisse Aldrovandi. It was acquired using photogrammetry with a Sony Alpha 7 I camera (ISO 1000, f/10, 1/3 sec. exposure, 59 mm focal length) and a lightbox setup. The positioning of the object within the lightbox made it difficult to capture certain areas, such as the back of the head and the lower portion of the bust, due to limited camera access and the immobility of the object. The processing of photogrammetric data was carried out using the 3DF Zephyr software. The RAWp model was then exported and loaded into Blender, where manual intervention was applied to the missing parts.

The workflow began with the identification of errors and gaps in the geometry through close inspection of the model. In this case, issues were detected in the reconstructed area at the back of the head, at the tip of the left ear (the one with the round earring), as well as the presence of extra faces on the base and an open, non-manifold geometry requiring closure. These errors were subsequently corrected by carefully reconstructing the back of the head to restore consistency with the rest of the geometry, refining the tip of the left ear to achieve a more natural shape, and repairing the base of the bust. Once the missing parts were reconstructed, colour painting was performed using Vertex Colours as a masking tool to distinguish the modified areas.

This required the creation of a new Color Attribute in Blender (accessible under Data Properties), prepared for use as a mask. In Vertex Paint mode, the model is initially displayed in black, with the brush set to white to mask the reconstructed regions. After completing the colour painting, the process continues with baking the texture to generate a 2K resolution colour map, keeping the base model in black and the integrated areas in white.

Figure 5 - Comparison between the RAWp and DCHO with vertex colour map and diffuse of the Dschagga Woman bust

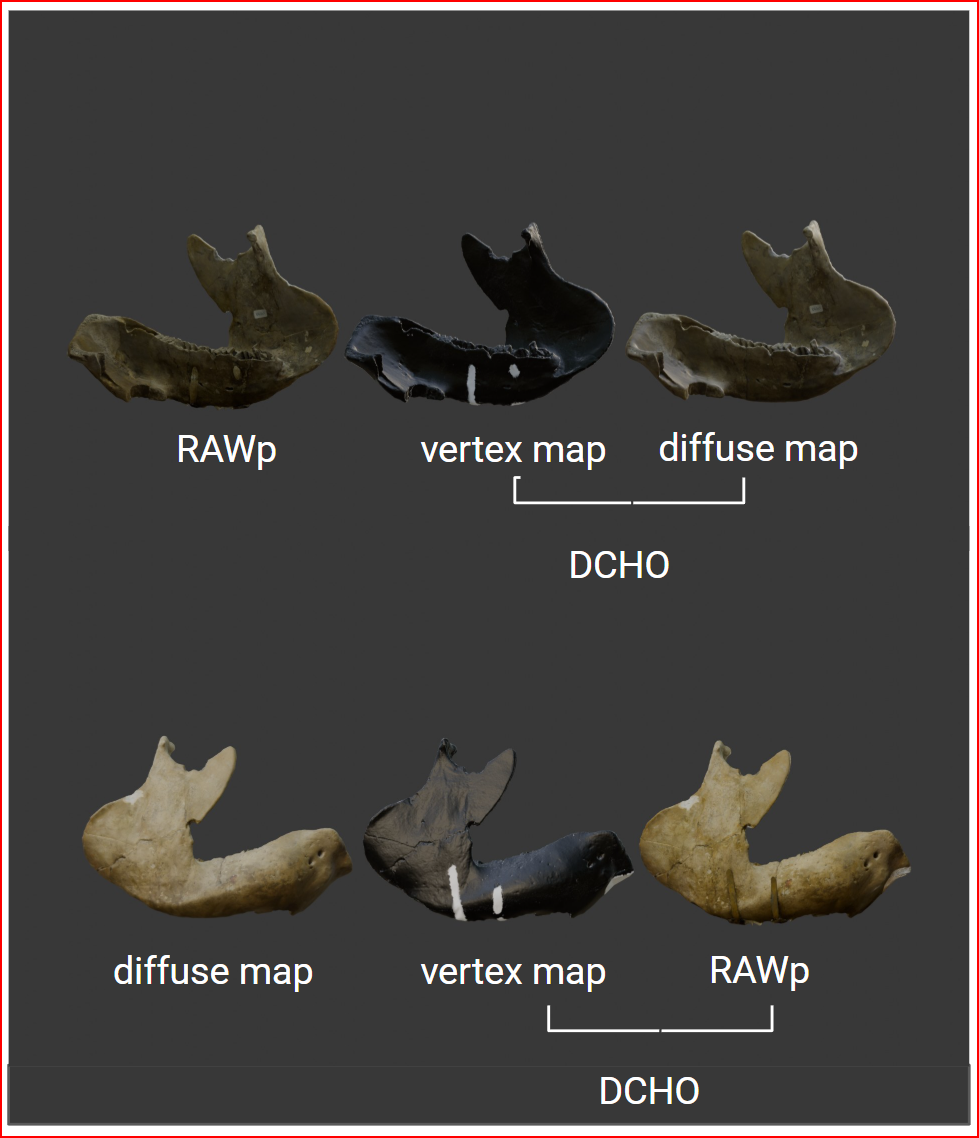

CS3: Glyptodon Jaw

The mandible of Glyptodon (Fabbri, 2025) (Figure 6) was acquired through photogrammetry using a Panasonic DMC-LX100 (ISO 400, f/2.5, 1/500 sec. exposure, 11 mm focal length). The specimen belongs to the Geology Collection of the Giovanni Capellini Museum, which is part of the University Museums Network of Bologna. Photogrammetry was conducted inside a lightbox, and the raw data collected were subsequently subjected to white balance correction. Photogrammetric processing was performed in 3DF Zephyr. The resulting RAWp model displayed a single gap in the anterior portion of the mandible. The model was then imported into Blender, where the support structure used during acquisition was manually removed, generating a second void in the mesh. Both gaps were subsequently addressed manually in Blender. To document the vertices added during operator intervention, these were selected and visually distinguished using the Vertex Color tool. In Vertex Paint mode, the model is initially rendered in black, with the brush set to white to mask the reconstructed regions. Upon completion of the colour painting, the workflow continued with texture baking to produce a 2K resolution colour map, in which the base model remains black and the integrated areas are shown in white.

Figure 6 - Comparison between the RAWp and DCHO with vertex colour map and diffuse of the Glyptodon Jaw
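The black-and-white masking used in CS2 and CS3 can be summarised, independently of Blender, as assigning one colour per vertex. The sketch below is a schematic stand-in for the interactive Vertex Paint step: the function names are hypothetical, and the share of flagged vertices is an additional indicator introduced here only for illustration.

```python
BLACK = (0.0, 0.0, 0.0)  # geometry derived from acquisition data
WHITE = (1.0, 1.0, 1.0)  # geometry added by the operator

def tag_interventions(vertex_count, added_vertex_indices):
    """Return one colour per vertex: white where the operator added geometry."""
    colours = [BLACK] * vertex_count
    for index in added_vertex_indices:
        colours[index] = WHITE
    return colours

def integrated_share(colours):
    """Fraction of vertices flagged as operator-made."""
    return sum(1 for c in colours if c == WHITE) / len(colours)

# Toy mesh with 8 vertices, two of which were added while filling a gap.
colours = tag_interventions(vertex_count=8, added_vertex_indices={5, 6})
share = integrated_share(colours)
```

Recording the mask at the moment of intervention means that no threshold is needed: every flagged vertex is one the operator created.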

Results

The pipeline described in this paper was designed to produce outputs that meet the photorealistic requirements of a project with dissemination purposes. Efforts were made to utilise open formats and software at every step of the workflow, aligning with FAIR principles. For the long-term preservation of 3D data, the team selected Zenodo (https://zenodo.org/) as the final public repository, given its adherence to Open Science principles. Specifically, the 3D models were deposited in the .obj format. Nonetheless, .obj proved to offer insufficient support for advanced features (Barzaghi et al., 2025), including vertex colour maps, whose support in .obj relies on non-standard extensions and is therefore not consistently interoperable. Consequently, future implementations will rely on the .fbx format, which is widely regarded as a de facto standard for the exchange of geometry and texture data in static 3D models. Although Zenodo is not a disciplinary repository and does not provide dedicated functionalities for 3D or Cultural Heritage data, it assigns persistent DOIs to all deposited items, supports generic high-level metadata standards (DataCite Metadata Schema, Dublin Core), and is widely recognized and supported by the research community. Its stability, broad accessibility, and institutional support make it practical and reliable as a final repository, particularly since it is already familiar to all project partners, is independent of the institutions involved, and allows the creation of a community for the CHANGES Spoke 4 project, consolidating all outputs under a single umbrella rather than dispersing them across multiple repositories. While not an ideal solution for Cultural Heritage data, Zenodo currently represents the most effective approach within the Italian context, where dedicated disciplinary infrastructures are not yet available (Barzaghi et al., 2024a).

The published digitised DCHOo combines perceptual realism with low memory usage and quick Web3D loading, thanks to the optimisation of the geometry. It includes clear paradata to indicate integrated sections, enabling both casual users and specialists to explore the item and assess it before requesting raw data or the physical object for detailed study.

To enable the contextual visualisation of the completed model alongside its integrations, the Web3D TRACE tool was developed (Figure 7; https://aton.ispc.cnr.it/a/trace/). Built upon the open-source ATON framework created by ISPC-CNR (Fanini et al., 2021), TRACE was implemented as a custom application leveraging the framework’s “plug-and-play” architecture (Fanini et al., 2025). Both TRACE and the ATON framework are released as open-source software, ensuring transparency, reproducibility, and long-term reusability. The framework is based on THREE.js, one of the largest open-source Web3D libraries, supported by a massive community. ATON is currently supported by growing user and developer communities and has already been adopted by multiple public institutions and private companies within the national CHANGES and H2IOSC projects. At the European level, ATON is actively used within three sister projects of ECHOES (StratiGraph, COLOURS, and PlaceMusXR), demonstrating its applicability and robustness across diverse research contexts.

TRACE (https://github.com/phoenixbf/trace) is designed to present a given 3D asset from local or online collections. It loads the original glTF model with standard maps such as base colour, normal, roughness, and metalness, together with the associated Refinement Trace Maps, which are stored as separate files. The tool allows users to control these layers interactively, enabling quick switching between the material visualisation and the semantic layer (manual interventions encoded in Refinement Trace Maps). The Web3D tool can be configured to provide a selection of items from local or remote collections (even on different server nodes) and can also provide XR modes (relying on the ATON framework) to access and inspect an item in AR or with immersive VR devices.
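The layer control described above can be illustrated with a minimal sketch. It is not taken from the TRACE source code. It assumes that the base colour map and the Refinement Trace Map are sampled at the same resolution, that black in the trace map means "no intervention", and that a hypothetical `opacity` parameter controls how strongly the semantic layer overlays the material. All names are illustrative.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]


def blend_pixel(base: RGB, trace: RGB, opacity: float) -> RGB:
    """Linearly interpolate between the material colour and the trace colour."""
    if not 0.0 <= opacity <= 1.0:
        raise ValueError("opacity must be in [0, 1]")
    return tuple((1.0 - opacity) * b + opacity * t for b, t in zip(base, trace))


def overlay_trace(
    base_map: List[RGB],
    trace_map: List[RGB],
    opacity: float,
    untouched: RGB = (0.0, 0.0, 0.0),  # assumption: black marks original areas
) -> List[RGB]:
    """Overlay the trace map on the base colour map, pixel by pixel.

    Pixels where the trace map equals `untouched` keep the material colour,
    so only areas marked as interventions are tinted.
    """
    if len(base_map) != len(trace_map):
        raise ValueError("maps must have the same number of pixels")
    return [
        b if t == untouched else blend_pixel(b, t, opacity)
        for b, t in zip(base_map, trace_map)
    ]
```

With `opacity` set to 0 the output equals the textured model, and with 1 the marked areas show the trace colour only. Intermediate values give the graduated overlay that lets a viewer read both layers at once.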

However, storing the 3D models in a repository such as Zenodo allows the semantic layer to be embedded directly within the model and ensures its accessibility independently of the TRACE tool, which operates solely as an advanced viewer mediating the visual exploration of that layer. TRACE, in fact, does not include an annotation system, and segmentation is therefore performed upstream, during the 3D model authoring phase.

Figure 7 - Example of the TRACE tool tested on the 3D model of the taxidermied Himalayan Monal

Discussion

As already mentioned, a recent survey (Vigie, 2022) documented a wide variety of alternative approaches and the absence of shared standards throughout the entire tangible cultural heritage digitisation pipeline, including Computer Reconstruction methodologies for CH. In particular, there is an absence of a common cognitive/ontological model or established practices for transparently documenting processes and results, despite methodological proposals such as VRIM and CRMvr having been put forward. A fundamental prerequisite is the capacity to annotate models semantically and spatially, establishing a linkage between geometry and semantics through three-dimensional annotations referring to specific portions of the mesh, point cloud or HBIM model. It is evident that two-dimensional and three-dimensional platforms, such as Sketchfab, Voyager or ATON, demonstrate considerable potential in this respect. Nevertheless, these platforms remain contingent upon the quality of the models and the management of multi-representation data (Croce et al., 2020). The interoperability of annotations is still in the experimental phase: initial pipelines exist, but no established practices. In the field of HBIM, the application of these principles is more intuitive, as these systems are designed to associate properties with architectural elements. However, as they are derived directly from BIM, their application is mainly limited to built architecture. The object-centric logic facilitates the annotation of entire components (e.g. walls, floors), but is complex at the sub-part level, as in the case of localised degradation, which requires additional superimposed specialised objects, such as the adaptive elements (Croce et al., 2020) or the proxies deployed within the EM workflow (Demetrescu & Ferdani, 2021).

The pipeline proposed in this paper specifically addresses the critical need for transparency and traceability in the manipulation of 3D assets by retaining multiple stages of the modelling process (RAWp, DCHO, DCHOo) and introducing the Vertex Colour Map methodology. Rather than pursuing visual realism in a computer graphics sense, the pipeline prioritises visual credibility and documentary clarity by making authorial interventions explicit and comparable across versions. In a field where the pursuit of unqualified realism frequently undermines the pursuit of documentary accuracy, such clarity is of crucial importance. Moreover, the incorporation of paradata within real-time Web3D environments, as demonstrated by the TRACE tool, establishes a pragmatic model for semantic annotation and interactive exploration. By linking visual evidence to modelling decisions, the approach supports critical inspection rather than masking interpretative gaps, enabling users to assess both the strengths and the limitations of the resulting models.

A key point that emerged from this work concerns the comparison between the tracking-based approach adopted in the case studies and an alternative methodology already discussed and proposed in another paper (Ammirati et al. 2025), which is founded on a post-hoc, method-agnostic identification process. Unlike continuous tracking, this latter strategy does not rely on the systematic recording of all interventions but instead enables the retrospective detection of geometric modifications through a false-colour texturing technique.

The application of this generalised approach to case studies presenting similar acquisition challenges made it possible to test its feasibility and to validate it. This dual perspective underscores its capacity to function independently of particular workflows or file formats, thereby mitigating the risks associated with inconsistencies or data loss. The generation of a Refinement Trace Map proved to be a particularly effective method of differentiating between original and reconstructed areas, enhancing the transparency of the overall pipeline and improving the traceability of subsequent interventions.

The comparison suggests that the two methodologies should not be regarded as mutually exclusive, but rather as complementary. On the one hand, continuous tracking ensures accuracy and granularity in documenting each modelling operation; on the other, the post-hoc method provides flexibility and resilience, particularly valuable in contexts where complete tracking cannot be guaranteed. The integration of these approaches may therefore represent a promising avenue towards the establishment of more robust, transparent, and reproducible practices in 3D cultural heritage documentation.

It must be noted that current approaches, including those explored in this work, still rely on surface-level annotations (e.g., colour maps) and do not properly segment the mesh, thus lacking formal identifiers that would link the segments to external semantic structures. Although the EM relies on an actual segmentation of the geometry, whereas our method is based on a semantic map applied to an undivided mesh, the Vertex Colour Map would also be suitable for a real segmentation of the model, thus setting the preconditions for transposing the EM method, which was developed for Virtual Archaeology, to the GLAM sector. Further challenges remain in standardising annotation practices. Future research should focus on developing automated segmentation and tagging techniques, while retaining human oversight to ensure the reliability of interpretative choices.
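How a vertex colour layer could drive a real segmentation of the mesh can be sketched as follows. This is an illustrative assumption, not the method implemented in the project. Faces whose three vertices share the same colour are grouped under a named segment, while faces spanning two colours are collected separately as boundary faces. The colour-to-label mapping and all names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Colour = Tuple[int, int, int]
Face = Tuple[int, int, int]


def segment_faces_by_colour(
    vertex_colours: Sequence[Colour],
    faces: Sequence[Face],
    labels: Dict[Colour, str],
    mixed_label: str = "boundary",
) -> Dict[str, List[int]]:
    """Group face indices into named segments according to vertex colours."""
    segments: Dict[str, List[int]] = defaultdict(list)
    for face_index, face in enumerate(faces):
        colours = {vertex_colours[v] for v in face}
        if len(colours) == 1:
            # Uniformly coloured face: look up its semantic label.
            label = labels.get(next(iter(colours)), "unlabelled")
        else:
            # The face straddles two regions of the colour map.
            label = mixed_label
        segments[label].append(face_index)
    return dict(segments)
```

Each resulting segment key could then serve as the formal identifier that a surface-level colour map lacks, for example as the target of an external semantic annotation. How boundary faces are assigned would remain an authorial decision to be documented.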

Conclusions

In the context of Spoke 4 of the CHANGES project, a digital twin pipeline was developed to meet the technical and qualitative requirements of dissemination-focused projects while explicitly preserving the documentary value of the digitised assets, without compromising scientific rigour. The workflow prioritised the use of open formats and software, was associated with a systematic metadata structure in accordance with FAIR principles, and explicitly encoded correction interventions within the models, thereby providing an embedded anchor for paradata. At the same time, the published digitised DCHOo models have been shown to achieve a high level of visual credibility and low memory consumption, with the additional advantage of fast Web3D loading.

This balance is achieved through geometry optimisation strategies that prioritise perceptual coherence and accessibility for dissemination contexts. The efficacy of this pipeline was successfully demonstrated using the Aldrovandi digital twin, which was developed within the framework of the CHANGES project.

The employment of Vertex Colour Maps and Refinement Trace Maps to ensure the monitoring of geometric integrations during the reconstruction phase facilitates the visualisation of all modifications applied, thereby ensuring scientific transparency and traceability throughout the entire process. Rather than resolving all documentation challenges, these tools make modelling decisions, interventions, and uncertainties explicitly visible, supporting critical evaluation and reuse.

Furthermore, enabling the semantic layer to be visualised on demand through the TRACE tool, with a controllable degree of intrusiveness over the textured model, made it possible to reconcile the achieved photorealism with the explicit interrogability of the model in relation to the digitisation process.

Future work will focus on strengthening the role of the Extended Matrix system as a framework for project-level gap analysis, rather than as a purely visual enhancement. While graduated false-colour maps can provide increased nuance beyond binary representations, their primary value lies in supporting the identification, classification, and communication of different types of gaps (e.g. missing data, interpretative additions, low-confidence areas), not in addressing the full range of unresolved challenges in heritage media documentation. In this regard, the use of the Extended Matrix tools formalises reconstructive hypotheses that reflect not just visual outcomes but the full interpretive process supporting the project.

Within this perspective, the further extension of the TRACE tool within the ATON framework is not intended solely to facilitate the visualisation of semantic maps, but to support structured project-level gap analysis by making modelling decisions, uncertainties, and data absences explicitly identifiable and comparable. In this sense, the proposed approach introduces a structured and transparent method for articulating limitations and decision points within 3D workflows, contributing to, but not exhausting, the broader methodological and epistemological discourse surrounding the documentation of complex cultural heritage assets.

Acknowledgements

We acknowledge the CHANGES Foundation and the University Museum Network of Bologna. We also extend our thanks to the cultural institutions that kindly lent some of the artifacts on display in the exhibition, including the Civic Archaeological Museum and the Civic Medieval Museum of Bologna.

Preprint version 3 of this article has been peer-reviewed and recommended by Peer Community In Archaeology (https://doi.org/10.24072/pci.archaeo.100689; Pantos, 2026)

Supplementary information

The three case studies discussed in this paper have been made publicly available on Zenodo at the following links:

Teriaca Vase - DCHO and DCHOo: https://doi.org/10.5281/zenodo.17456480 (Sullini, 2025).

Dschagga woman bust - DCHO and DCHOo: https://doi.org/10.5281/zenodo.17431849 (Rega, 2025).

Glyptodon Jaw - DCHO and DCHOo: https://doi.org/10.5281/zenodo.17431894 (Fabbri, 2025).

Funding

This work has been partially funded by Project PE 0000020 CHANGES - CUP B53C22003780006, NRP Mission 4 Component 2 Investment 1.3, Funded by the European Union - NextGenerationEU.

Conflict of interest disclosure

The authors declare that they comply with the PCI rule of having no financial conflicts of interest in relation to the content of the article.

Credits

Authors’ contribution according to CRediT (https://credit.niso.org/):

Alice Bordignon: Methodology, Investigation, Writing – review & editing

Federica Collina: Methodology, Writing – review & editing

Francesca Fabbri: Methodology, Investigation, Writing – original draft, Writing – review & editing

Bruno Fanini: Data curation, Software, Writing – review & editing

Daniele Ferdani: Methodology, Writing – review & editing

Maria Felicia Rega: Supervision, Visualization, Methodology, Investigation, Writing – original draft, Writing – review & editing

Mattia Sullini: Supervision, Conceptualization, Writing – original draft, Writing – review & editing

CC-BY 4.0
